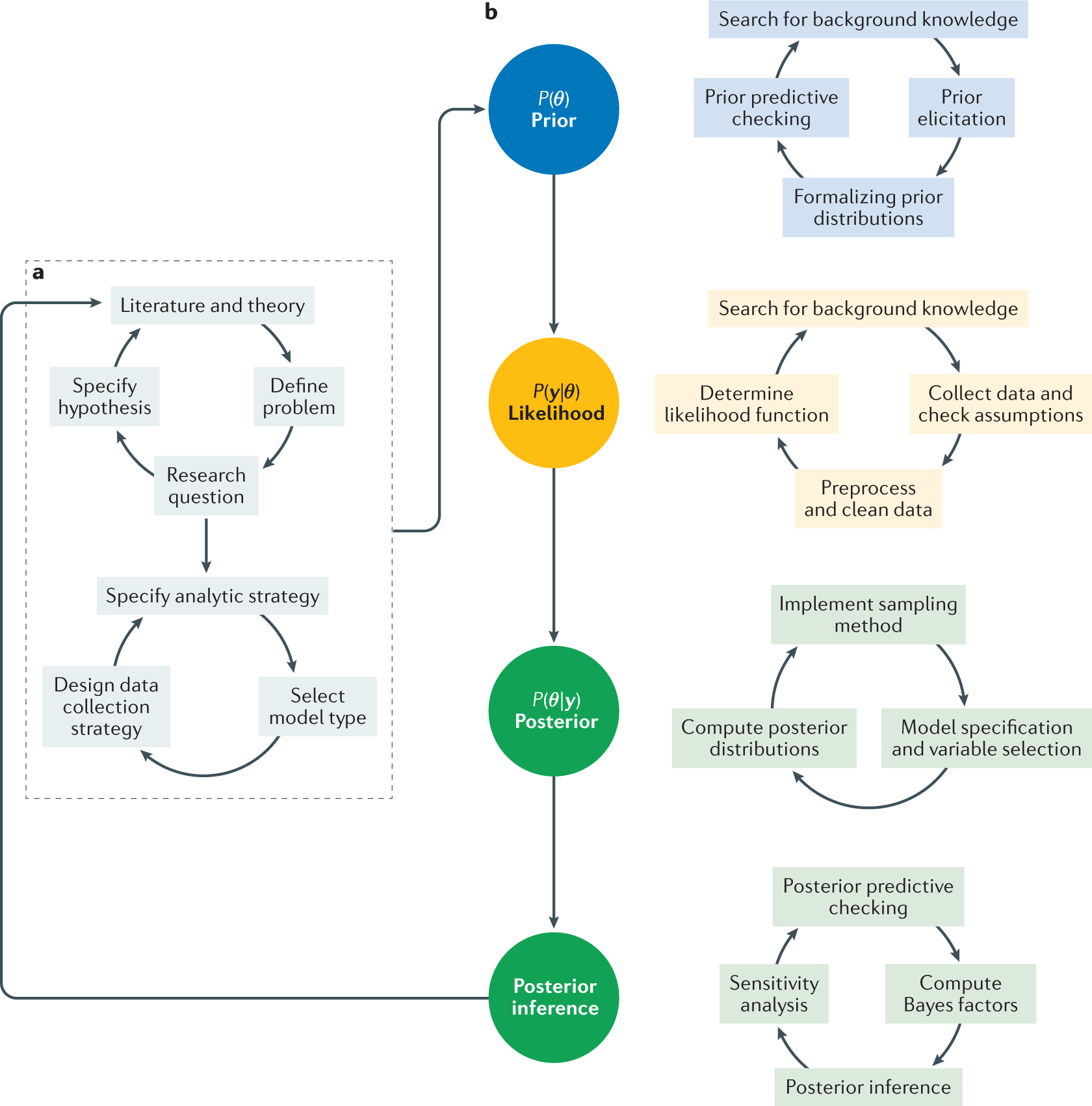

Statistical Bayesian approaches to regression can be used to model nonlinear functions between inputs and outputs in a simple, flexible, nonparametric and probabilistic manner. In Gaussian process (GP) model approaches, the latent function is assumed to be a realisation of a Gaussian stochastic process. A GP is a family of random variables (with a common underlying probability space) ranging over an index set, such that any finite subset of the random variables has a joint Gaussian distribution with consistent parameters. A realisation of the GP is a deterministic function of the index variable. A GP is fully specified by a mean function and a symmetric positive-definite covariance (kernel) function, which encapsulate any a-priori knowledge and/or assumptions in relation to the target function. The freedom to choose from a range of possible kernel functions introduces a degree of flexibility in the assumed complexity and smoothness of this underlying target function.

The Gaussian process latent variable model (GPLVM) introduced in Lawrence (2004) is a hierarchical model originally used to extend GPs to the (unsupervised) learning task of nonlinear dimension reduction, in which the inputs are unobserved latent variables. The model places independent GP priors over the mappings from the latent space to each component in the observed output space. In Lawrence (2005), a Gaussian prior is placed over the latent variables, which are optimized according to their maximum a-posteriori (MAP) estimates (equivalent to the maximum likelihood estimates with \(L_2\) regularisation). To capture uncertainty in the latent variables, Titsias and Lawrence (2010) developed a variational method for GPLVMs.

In this paper we consider the supervised version (sGPLVM) of the GPLVM, in which a GP prior is placed over latent variables indexed by known and observable inputs. This model was studied in a dynamical setting in Damianou et al. (2011), in which the only input is time, using a variational expectation-maximization (VEM) approach to determine point estimates of the hyperparameters. Our contribution is a novel framework for robust, fully Bayesian inference for the sGPLVM. Towards this end, it is natural to explore the posterior distribution of the hyperparameters and latent variables with Markov Chain Monte Carlo (MCMC), but strong correlations between the hyperparameters and latent variables lead to low efficiency and poor mixing (Betancourt and Girolami 2015). A method that can break these correlations is required, which we obtain by using a pseudo-marginal scheme that approximately integrates out the latent variables.

A major motivation for developing this method is the need to quantify hyperparameter uncertainty, and to quantify latent uncertainty more accurately. Indeed, variational methods make strong assumptions of independence and on the forms of distributions and, depending on the choice of divergence, they necessarily under- or over-estimate the variance (Blei et al.). When correlations between the latent variables (within variational factorisations) are large, and when these factors are over a non-trivial number of dimensions, this variational approximation becomes increasingly poor. Moreover, in the VEM setting, crucial hyperparameters determining the noise level and smoothness of the latent functions are fixed to approximate maximum marginal likelihood (ML) estimates, obtained by optimizing the variational lower bound to the marginal likelihood. In this paper, the effects and performance of the variational approximation are studied in illustrative examples based on simulated and real data.
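
The GP definition above (a mean function plus a kernel, with any finite set of function values jointly Gaussian) can be illustrated with a minimal sketch. It assumes a zero mean function and a squared-exponential kernel; the kernel choice, the lengthscale values, and the helper names `squared_exponential` and `sample_gp_prior` are illustrative assumptions and are not taken from the paper.

```python
import numpy as np


def squared_exponential(x1, x2, variance=1.0, lengthscale=1.0):
    """Squared-exponential kernel on 1-D inputs (an assumed, illustrative choice)."""
    diff = x1[:, None] - x2[None, :]
    return variance * np.exp(-0.5 * (diff / lengthscale) ** 2)


def sample_gp_prior(x, n_samples=3, lengthscale=1.0, jitter=1e-6, seed=0):
    """Draw function values at the finite index set x from a zero-mean GP prior."""
    rng = np.random.default_rng(seed)
    # Any finite subset of a GP is jointly Gaussian with covariance K.
    K = squared_exponential(x, x, lengthscale=lengthscale)
    # A small diagonal jitter keeps the Cholesky factorisation numerically stable.
    L = np.linalg.cholesky(K + jitter * np.eye(len(x)))
    z = rng.standard_normal((len(x), n_samples))
    # If z ~ N(0, I), then L @ z ~ N(0, K + jitter * I).
    return (L @ z).T  # shape (n_samples, len(x))


x = np.linspace(0.0, 5.0, 50)
smooth = sample_gp_prior(x, lengthscale=2.0)  # long lengthscale: slowly varying draws
rough = sample_gp_prior(x, lengthscale=0.2)   # short lengthscale: rapidly varying draws
```

Each row of `smooth` and `rough` is one realisation evaluated on the grid `x`. Changing only the kernel lengthscale changes how quickly the sampled functions vary, which is the sense in which the kernel encodes prior assumptions about smoothness.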